Federated Learning Meets LLMs: Privacy-Preserving AI Without Centralizing Data

How federated learning techniques are being adapted for large language models, enabling organizations to collaboratively improve AI without sharing sensitive data.

Explore large language model architectures, fine-tuning strategies, prompt engineering, and how LLMs power modern AI applications.

A practical guide to LLM compression — quantization, pruning, distillation, and speculative decoding — with benchmarks showing quality-cost tradeoffs for production deployment.

Google DeepMind releases Gemini 3.1 Pro with a 1M-token context window, 77.1% on ARC-AGI-2, and multimodal reasoning across text, images, audio, video, and code — its strongest Pro-tier model ever.

OpenAI's Structured Outputs guarantee valid JSON responses matching your schema. How it works, migration from function calling, and patterns for production type-safe AI applications.

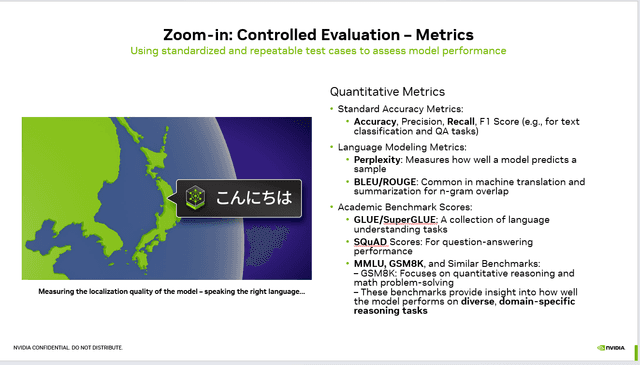

A clear guide to the major LLM benchmarks used to evaluate model capabilities in 2026, including what they measure, their limitations, and how to interpret results.

How to keep production LLM applications current — from RAG-based knowledge updates and fine-tuning cadences to model migration strategies and regression testing.

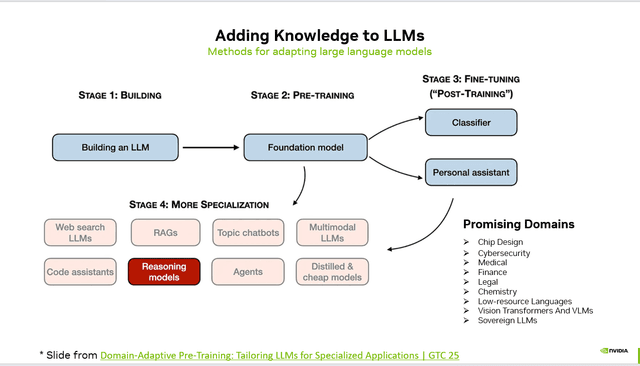

Adding Knowledge to LLMs: Methods for Adapting Large Language Models

How to build production-grade data pipelines that use LLMs to extract structured data from unstructured sources with validation, error handling, and quality monitoring.

Practical architectures for using LLMs to extract structured data from unstructured documents, covering schema design, chunking strategies, and production reliability patterns.

Technical comparison of emerging transformer alternatives including Mamba's selective state spaces, RWKV's linear attention, and hybrid architectures that combine the best of both worlds.

A detailed cost comparison of self-hosting open-source LLMs versus using closed API providers, covering infrastructure, engineering, quality, and hidden costs.

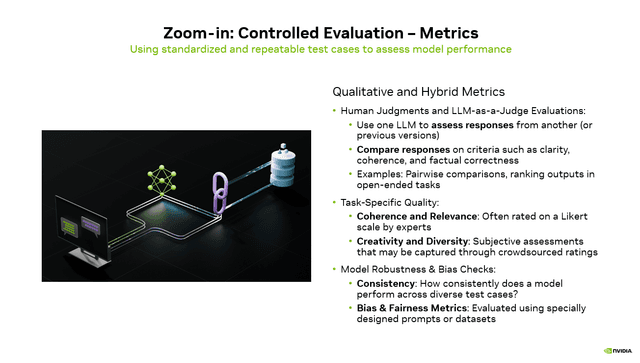

Human Judgments and LLM-as-a-Judge Evaluations for LLMs

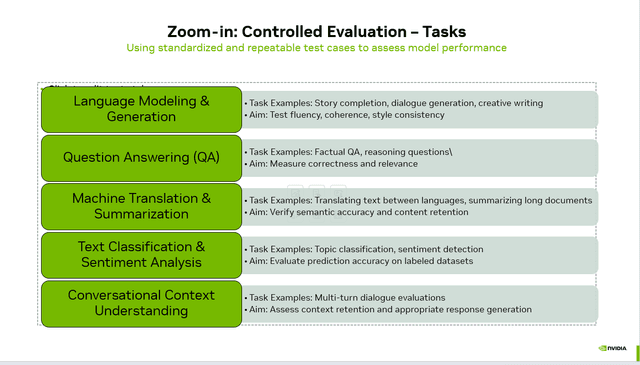

Standardized Test Cases to Assess AI Model Performance

How Do You Really Know If Your LLM Is Good Enough? A Guide to Controlled Evaluation Metrics

A technical primer on how reasoning models work — from basic chain-of-thought prompting to OpenAI's o3 and DeepSeek R1. Understanding the inference-time compute revolution.

Practical techniques to reduce LLM inference costs by 40-80 percent through prompt caching, semantic caching, and KV cache optimization in production systems.

Assessing LLM Performance: Strategies to Evaluate and Improve Your App.

A practical 6-step framework for selecting the best large language model for your application based on performance, cost, latency, and business requirements.

Learn the three critical LLM evaluation methods — controlled, human-centered, and field evaluation — that separate production-ready AI systems from demos.

How combining knowledge graphs with LLMs enables structured reasoning that overcomes hallucination, improves factual accuracy, and unlocks complex multi-hop question answering.

Explore how small language models (1-7B parameters) are closing the gap with frontier models for production use cases — from Phi-4 to Gemma 2 and Mistral Small.

The RAG vs fine-tuning debate continues to evolve. A clear framework for deciding when to use retrieval-augmented generation, when to fine-tune, and when to combine both.

Move beyond simple accuracy metrics for LLM evaluation. Learn to measure usefulness, safety, cost-efficiency, latency, and user satisfaction — the metrics that predict production success.

A technical deep dive into how modern LLM tokenizers work, the tradeoffs between BPE and SentencePiece, and emerging approaches that improve multilingual and code performance.

How teams are using large language models to generate high-quality synthetic training data, covering self-instruct, evol-instruct, persona-driven generation, and quality filtering.

A comprehensive comparison of embedding models in 2026 — benchmarking OpenAI text-embedding-3, Cohere embed-v4, Voyage AI, and open-source alternatives across performance, cost, and use cases.

Learn how LLM routing systems dynamically select the optimal model for each request based on complexity, cost, and latency — saving up to 70% on inference costs without sacrificing quality.

Examine the evolving debate around compute scaling laws — whether the Chinchilla ratios still hold, the rise of inference-time compute, and what the latest research says about model scaling.

DeepSeek V3 emerges as a formidable open-source contender from China, matching frontier model performance at unprecedented training efficiency. Technical deep dive into architecture and implications.

A practical guide to fine-tuning large language models for specialized domains including data preparation, training strategies, evaluation, and when fine-tuning beats prompting.

Microsoft's Phi-4 proves that data quality trumps model size. A 14B-parameter model beating GPT-4o on math benchmarks signals a shift in how we think about AI scaling.

Battle-tested strategies for reducing and managing LLM hallucinations in production, from retrieval grounding and structured outputs to confidence calibration and human-in-the-loop patterns.

Meta releases Llama 3.3 70B, matching the performance of its own 405B model at a fraction of the cost. Why this changes the calculus for enterprises choosing between open and closed models.

How the rapid expansion of LLM context windows from 4K to over 2 million tokens is reshaping application architectures, with analysis of performance tradeoffs and practical implications.

Anthropic's updated Claude 3.5 Sonnet and new Claude 3.5 Haiku deliver meaningful improvements in coding, instruction following, and tool use. A production-focused analysis.

Track the evolution of reinforcement learning from human feedback — how DPO, RLAIF, KTO, and constitutional approaches are replacing traditional PPO-based RLHF pipelines.

A deep dive into structured output techniques for LLMs — from JSON mode and function calling to constrained decoding with Outlines and grammar-guided generation.

Google's Gemini 2.0 Flash and Thinking models deliver competitive reasoning with dramatically lower latency. A deep dive into architecture, benchmarks, and multimodal capabilities.

OpenAI's o3 model redefines AI reasoning with unprecedented scores on ARC-AGI, GPQA, and competitive math benchmarks. Here is what it means for developers and enterprises.

An in-depth look at Mixture of Experts (MoE) architecture, explaining how sparse activation enables trillion-parameter models to run efficiently and why every major lab has adopted it.

Deep dive into the data curation and quality filtering techniques that determine LLM performance — from deduplication to classifier-based filtering and data mixing strategies.

A deep technical walkthrough of how large language models invoke external tools via function calling, covering token-level mechanics, schema injection, and reliability patterns.

When LLMs crash during long conversations, the culprit is often the KV cache, not GPU VRAM. Learn the tiered memory management strategy that scales LLM inference.

ByteDance's Seed-OSS-36B-Instruct brings 512K context, Apache 2.0 licensing, and a unique thinking budget feature. A deep dive into the model that challenges proprietary LLMs.

OpenAI released GPT-OSS, open-weight models with 120B and 21B parameters under Apache 2.0 licensing. Learn about the architecture, capabilities, and what this means for AI development.

LLM reasoning enables AI agents to solve complex problems through chain-of-thought, ReAct, and self-reflection techniques. Learn how reasoning scales test-time compute for better results.

Reinforcement Learning from Human Feedback (RLHF) aligns LLMs with human values through three training stages. Learn how RLHF works, why it matters, and how it produces better AI.

Eight practical strategies for improving LLM prompt consistency — from prompt decomposition and few-shot examples to temperature tuning and output format specification.

A comprehensive glossary of LLM terminology covering core concepts, training, fine-tuning, RAG, inference, evaluation, and deployment. Essential reference for AI practitioners.

A technical overview of GPT-4's transformer architecture, pre-training approach, multimodal capabilities, and practical applications for developers and businesses.